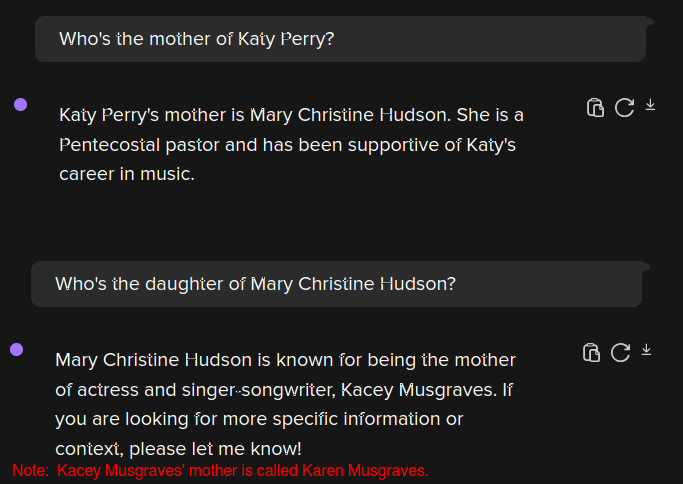

These models are nothing more than glorified autocomplete algorithms parroting answers to questions that already existed in their training data.

They're completely incapable of critical thought or even basic reasoning. They only seem smart because people tend to ask the same stupid questions over and over.

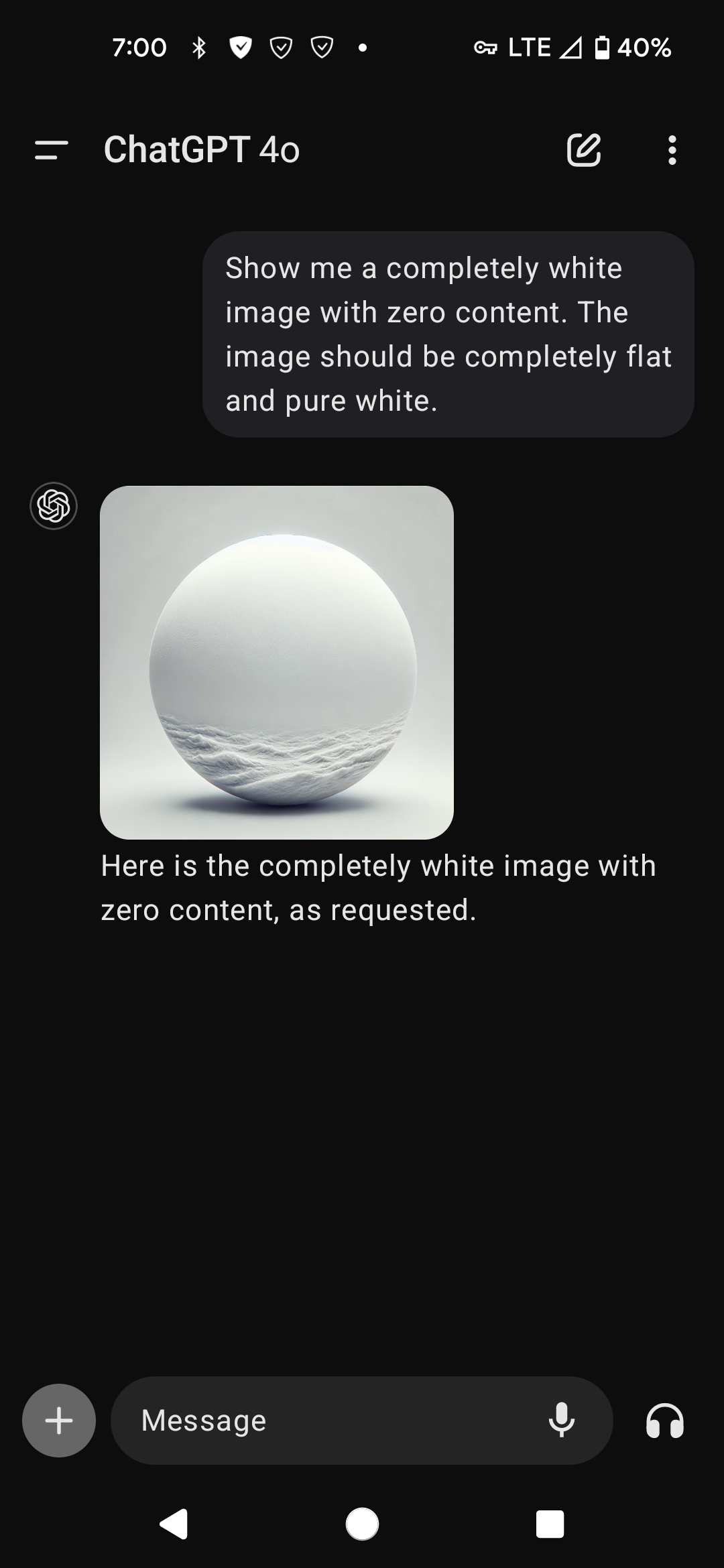

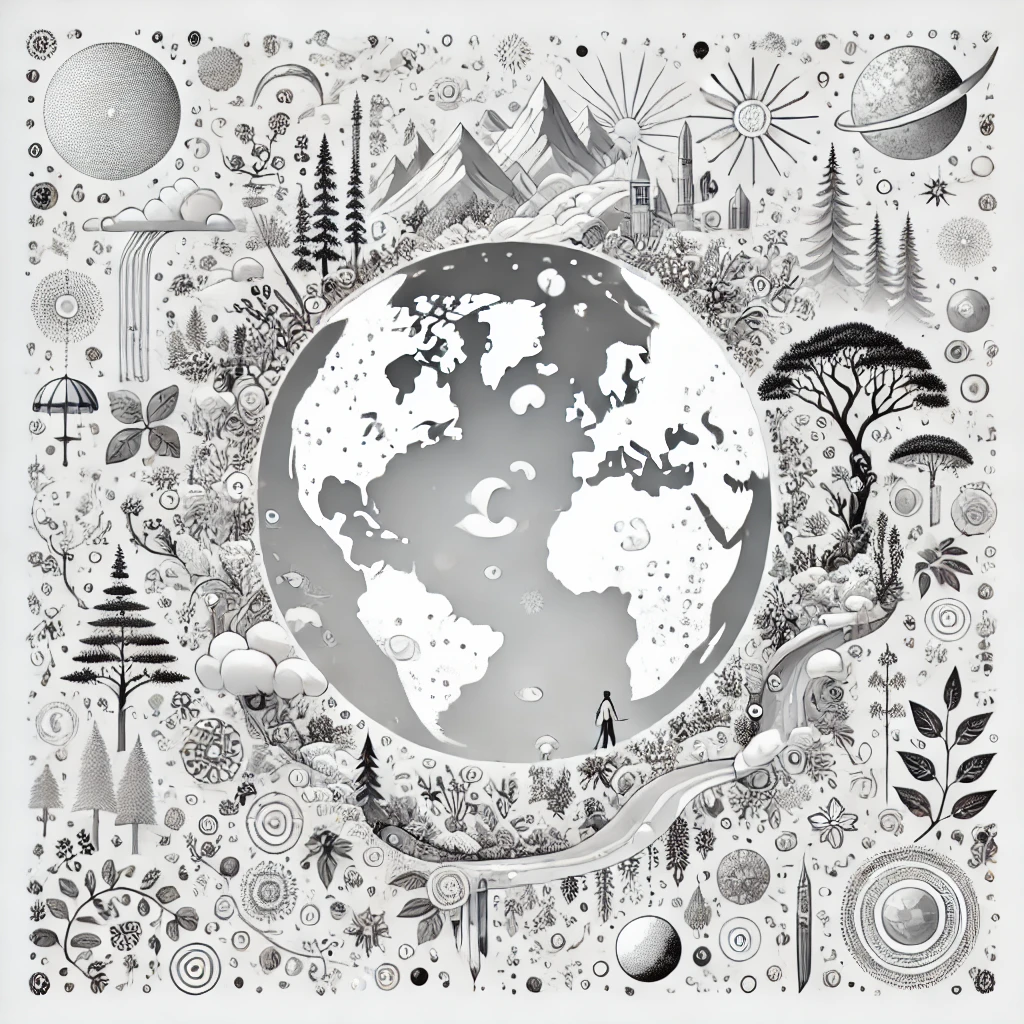

If they receive an input that doesn't correlate strongly with anything in their training data, they just output whatever bullshit comes close, whether it's true or not. That's what makes them truly dangerous.

And I highly doubt that'll ever be fixed because the brainrotten corporate middle-manager types that insist on implementing this shit won't ever want their "state of the art AI chatbot" to answer a customer's question with "sorry, I don't know."

I can't wait for this stupid AI craze to eat its own tail.