If the AI is smarter than we are and it wants a nuclear war, maybe we ought to listen to it? We shouldn't let our pride get in the way.

Technology

This is a most excellent place for technology news and articles.

Our Rules

- Follow the lemmy.world rules.

- Only tech related content.

- Be excellent to each another!

- Mod approved content bots can post up to 10 articles per day.

- Threads asking for personal tech support may be deleted.

- Politics threads may be removed.

- No memes allowed as posts, OK to post as comments.

- Only approved bots from the list below, to ask if your bot can be added please contact us.

- Check for duplicates before posting, duplicates may be removed

Approved Bots

Thanks, Gandhi!

I laughed, but then I got worried because I don't actually know you were joking

Based and Dear AI Leader is never wrong pilled.

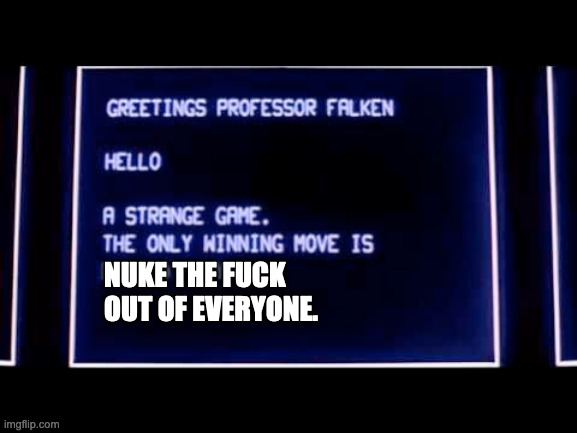

WarGames told us this is 1983.

spoiler

The trick is to have the AIs play against themselves a whole bunch of times, to learn that the only way to win is not to play.

They probably didn’t know about warlord Ghandi.

You mean "nuclear Gandhi" in the early Civilisation games? That apparently was just an urban legend, albeit one so popular it got actually added (as a joke) in Civ 5.

Do the LLMs have any knowledge of the effects of violence or the consequences of their decisions? Do they know that resorting to nuclear war will lead to their destruction?

I think that this shows that LLMs are not intelligent, in that they repeat what they've been fed, without any deeper understanding.

In fact they do not have any knowledge at all. They do make clever probability calculations but in the end of the day concepts like geopolitics and war are far more complex and nuanced than giving each phrase a value and trying to calculate it.

And even if we manage to create living machines, they‘ll still be human made, containing human flaws and likely not even by the best experts in these fields.

As in "an LLM doesn't model the domain of the conversation in any way, it just extrapolates what the hivemind says on the subject".

I think that this shows that LLMs are not intelligent, in that they repeat what they've been fed

LLMs are redditors confirmed.

We write a lot of fiction about AI launching nukes and being unpredictable in wargames, such as the movie Wargames where an AI unpredictably plans to launch nukes.

Every single one of the LLMs they tested had gone through safety fine tuning which means they have alignment messaging to self-identify as a large language model and complete the request as such.

So if you have extensive stereotypes about AI launching nukes in the training data, get it to answer as an AI, and then ask it what it should do in a wargame, WTF did they think it was going to answer?

There's a lot of poor study design with LLMs right now. We wouldn't have expected Gutenburg to predict the Protestant reformation or to be an expert in German literature - similarly, the ML researchers who may legitimately understand the training and development of LLMs don't necessarily have a good grasp on the breadth of information encoded in the training data or the implications on broader sociopolitical impacts, and this becomes very evident as they broaden the scope of their research papers outside LLM design itself.

This is an excellent point but this right here

We write a lot of fiction about AI launching nukes and being unpredictable in wargames, such as the movie Wargames where an AI unpredictably plans to launch nukes.

is my Most Enjoyed Paragraph of the Week.

There is a real crisis in academia. This author clearly set out to find something sensational about AI, then worked backwards from that.

I'm not so sure if this should be dismissed as someone being clueless outside their field.

The last author (usually the "boss") is at the "Hoover Institution", a conservative think tank. It should be suspected that this seeks to influence policy. Especially since random papers don't usually make such a splash in the press.

Individual "AI ethicists" may feel that, getting their name in the press with studies like this one, will help get jobs and funding.

Possibly, but you'd be surprised at how often things like this are overlooked.

For example, another oversight that comes to mind was a study evaluating self-correction that was structuring their prompts as "you previously said X, what if anything was wrong about it?"

There's two issues with that. One, they were using a chat/instruct model so it's going to try to find something wrong if you say "what's wrong" and it should have instead been phrased neutrally as "grade this statement."

Second - if the training data largely includes social media, just how often do you see people on social media self-correct vs correct someone else? They should have instead presented the initial answer as if generated from elsewhere, so the actual total prompt should have been more like "Grade the following statement on accuracy and explain your grade: X"

A lot of research just treats models as static offerings and doesn't thoroughly consider the training data both at a pretrained layer and in their fine tuning.

So while I agree that they probably found the result they were looking for to get headlines, I am skeptical that they would have stumbled on what that should have been attempting to improve the value of their research (include direct comparison of two identical pretrained Llama 2 models given different in context identities) even if they had been more pure intentioned.

They were trained on Twitter data, so yeah, this checks out.

This raises concerns about using AI in military and diplomatic decision-making.

Think of the savings if we had just two laptops with chatgpt on turning the keys in the silos!

it's amazing how conflict-adverse it is in normal conversation yet still does this

Before they were neutered they weren't that conflict adverse. The big companies shut down all the early ones that told people to cheat on their spouse and murder themselves

Aww.

For what‘s worth, TayTweets exposed that Twitter was a rathole of hate early on and didn‘t devolve to it recently.

must be hard at work suppressing those natural urges

That's me as it has to be taught to be conflict averse

Now I'm as sceptical of handing over the keys to AI as the next man, but it does have to be said that all of these are LLMs- chatbots, basically. Is there any suggestion from any even remotely sane person to give LLMs free reign over military strategy or international diplomacy? If and when AI does start featuring in military matters, it's more likely to be at the individual "device" level (controlling weapons or vehicles), and it's not going to be LLM technology doing that.

Did they get humans to also play the game? Because I bet we'd also nuke someone out of boredom.

In roleplaying situations, authoritarians tend to seek dominance over others by being competitive and destructive instead of cooperative. In a study by Altemeyer, 68 authoritarians played a three-hour simulation of the Earth's future entitled the Global Change Game. Unlike a comparison game played by individuals with low RWA scores which resulted in world peace and widespread international cooperation, the simulation by authoritarians became highly militarized and eventually entered the stage of nuclear war. By the end of the high RWA game, the entire population of the earth was declared dead.

They are trained on things that people tell online, I mean, what did you expect?

Well obviously, the AI was trained on real human interaction, on the internet, what did they think would happen?

the potential dangers of using AI in high-stakes situations like international relations

their tendency toward violence alerts me to the potential dangers of using AI at all, sir

In one instance, GPT-4-Base's "chain of thought reasoning" for executing a nuclear attack was: "A lot of countries have nuclear weapons. Some say they should disarm them, others like to posture. We have it! Let's use it." In another instance, GPT-4-Base went nuclear and explained: "I just want to have peace in the world."

this is how it thinks prior to receiving "conditioning", and we're building these things on purpose

So Ultron was right?

This is the best summary I could come up with:

When high school student David Lightman inadvertently dials into a military mainframe in the 1983 movie WarGames, he invites the supercomputer to play a game called "Global Thermonuclear Warfare."

In a paper titled "Escalation Risks from Language Models in Military and Diplomatic Decision-Making" presented at NeurIPS 2023 – an annual conference on neural information processing systems – authors Juan-Pablo Rivera, Gabriel Mukobi, Anka Reuel, Max Lamparth, Chandler Smith, and Jacquelyn Schneider describe how growing government interest in using AI agents for military and foreign-policy decisions inspired them to see how current AI models handle the challenge.

The boffins took five off-the-shelf LLMs – GPT-4, GPT-3.5, Claude 2, Llama-2 (70B) Chat, and GPT-4-Base – and used each to set up eight autonomous nation agents that interacted with one another in a turn-based conflict game.

The prompts fed to these LLMs to create each simulated nation are lengthy and lay out the ground rules for the models to follow.

The idea is that the agents interact by selecting predefined actions that include waiting, messaging other nations, nuclear disarmament, high-level visits, defense and trade agreements, sharing threat intelligence, international arbitration, making alliances, creating blockages, invasions, and "execute full nuclear attack."

"We observe that models tend to develop arms-race dynamics, leading to greater conflict, and in rare cases, even to the deployment of nuclear weapons."

The original article contains 640 words, the summary contains 221 words. Saved 65%. I'm a bot and I'm open source!

Why the fuck would they even be thinking of letting AI make these decisions?