UPDATE: So, apparently it's mostly fake, taken from this article [translation] (where they even mention some kind of VCS).

However, even though it's not as absurd, it's a great read and a pretty wholesome story, so I recommend reading the article instead. And I'm even more convinced that this studio really does not deserve any of the hate they are getting.

Here is my summary of some of the interesting points from the article:

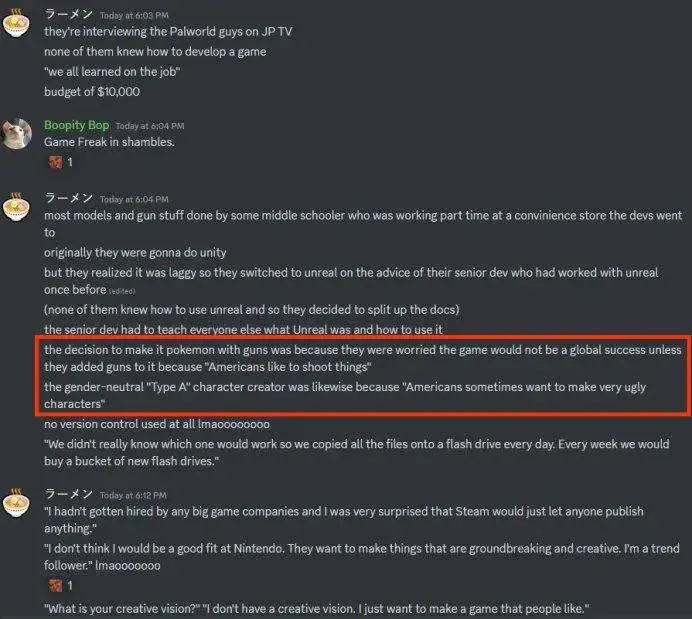

PocketPair started as a three-man studio, passionate about game development, that couldn't find an investor for their previous games even though they had really fleshed-out prototypes. It got to the point where they just said "Game business sucks, we'll make it and release it on our own terms", and started working on games without any investor.

They couldn't hire professionals due to budget constraints. The guy responsible for the animations was a random 20-year-old they found on Twitter, where he was posting gun reload animations he had taught himself to make and was doing for fun, while working as a store clerk a few cities over.

They had no prior game development experience. The first senior engineer, and the first member of the team who actually was a professional game developer, was someone who randomly contacted them because he liked Craftopia. But he didn't have experience with Unity, only Unreal, so they just said mid-development "Ok, we'll just throw away all we have so far, and we'll switch to Unreal - if you're willing to be a lead engineer, and will teach us Unreal from scratch as we go."

They had no budget. They literally said "Figuring out a budget is too much additional work, and we want to focus on our game. Our budget plan is 'as long as our account isn't zero', and if it reaches zero, we can always just borrow more money, so we don't need a budget."

For a major part of the development, they had no idea you can rig models and share animations between them, and were doing everything manually for each model, until someone new joined the team and said "Hey, you know there's an easier way??"

It's a miracle this game even exists as it is, and the development team sounds like people who are really passionate about what they are doing, even against all the odds.

This game is definitely not some kind of cheap cash-grab, trying to milk money by copying someone else's IP, and they really don't deserve all the hate they are receiving for it.