this post was submitted on 01 May 2025

-111 points (12.8% liked)

Is environmental impact at the top of anyone's list for why they don't like ChatGPT? It's not on mine, nor on that of anyone I have talked to.

The two most common reasons I hear are 1) no trust in the companies hosting the tools to protect consumers and 2) rampant theft of IP to train LLMs.

The author moves away from strict environmental focus despite claims to the contrary in their intro,

[...]

... yet doesn't address the most common criticisms.

Worse, the author accuses anyone who pauses to think of the negatives of ChatGPT of being absurdly illogical.

IDK what logical fallacy this is but claiming people are "freaking out over 3Wh" is very disingenuous.

Rating as basic content: 2/10, poor and disingenuous argument

Rating as example of AI writing: 5/10, I've certainly seen worse AI slop

My reason is that you can't trust the answers regardless. Hallucinations are a rampant problem. Even if we managed to cut it down to 1 in 100 queries hallucinating, you can't trust ANYTHING. We've seen well-trained and targeted AIs that don't directly take user input (so can't be super manipulated) in Google search results recommending that people put glue on their pizzas to make the cheese stick better... or that geologists recommend eating a rock a day.

If a custom tailored AI can't cut it... the general ones are not going to be all that valuable without significant external validation/moderation.
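To put a rough number on the 1-in-100 point: a minimal sketch, assuming each query hallucinates independently at the same rate (a simplification, real errors are probably correlated with the kind of question asked). The function name and the default rate are just for illustration.

```python
def p_at_least_one_hallucination(n_queries: int, rate: float = 0.01) -> float:
    """Chance that at least one of n_queries answers is hallucinated.

    Assumes every query fails independently with probability `rate`.
    """
    return 1.0 - (1.0 - rate) ** n_queries


for n in (1, 10, 100):
    print(f"{n:>4} queries -> {p_at_least_one_hallucination(n):.1%}")
```

Under that assumption, someone asking 100 questions is more likely than not to have hit at least one hallucinated answer, without knowing which one it was.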

Basically no. What you're calling tailored AI is actually low-cost AI. You'll be hard-pressed, on the other hand, to get ChatGPT o3 to hallucinate at all.

No, not basically no.

https://mashable.com/article/openai-o3-o4-mini-hallucinate-higher-previous-models

Stop spreading misinformation. The company itself acknowledges that it hallucinates more than previous models.

I stand corrected, thank you for sharing.

I was commenting based on anecdotal experience and I didn't know there was a test specifically for this.

I do notice that o3 is more overconfident and tends to find a source online from some forum and treat it as gospel

Which, while not correct, I would not treat as hallucination

There is also the argument that a downpour of AI generated slop is making the Internet in general less usable, hurting everyone (except the slop makers) by making true or genuine information harder to find and verify.

What exactly is the argument?

Thank you for your considered and articulate comment

What do you think about the significant difference in attitude between comments here and in (quite serious) programming communities like https://lobste.rs/s/bxixuu/cheat_sheet_for_why_using_chatgpt_is_not

Are we in different echo chambers? Is ChatGPT a uniquely powerful tool for programmers? Is social media a fundamentally Luddite mechanism?

I'm curious if you can articulate the difference between being critical of how a particular technology is owned and managed versus being a Luddite?

I think I'm on board with arguing against how LLMs are being owned and managed, so I don't really have much to say

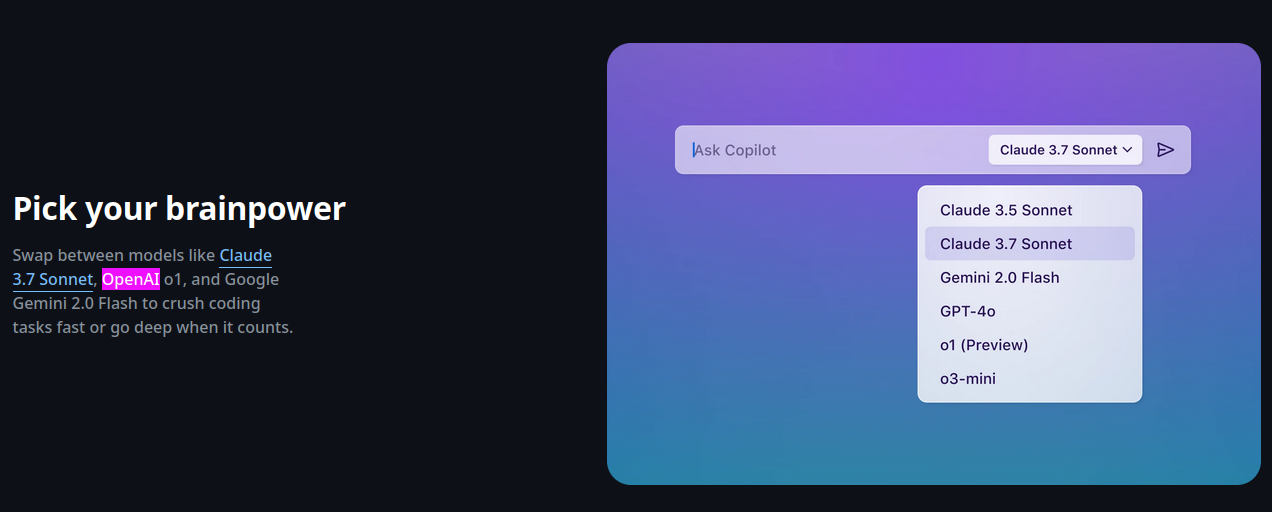

I would say GitHub Copilot (which uses a GPT model) uses more Wh than ChatGPT, because it gets blasted with more queries on average: the "AI" autocomplete triggers almost every time you stop typing, or on random occasions.

I don't think this answers the question

They're specifically showing you that in the use case you asked about the assertions must change. Your question is bad for the case that you're specifically asking about.

So no, it doesn't answer the question... But your question has a bunch more caveats that must be accounted for that you're just straight up missing.

No, that is not how reasoned debate works. You have to articulate your argument, or else you're just sloppily babbling talking points.

If the premise of your argument is fundamentally flawed, then you're not having a reasoned debate. You're just a zealot.

Please articulate why the premise of my argument is fundamentally flawed

They did... You just refuse to acknowledge it. It's no longer a discussion of simply 3Wh when GitHub Copilot is making queries every time you pause typing. It could easily equate to hundreds or even thousands of queries a day (if not rate limited). That fully changes the scope of the argument.
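As a rough sketch of that arithmetic: the 3 Wh figure is the per-query number quoted in this thread, and applying it unchanged to every autocomplete request is an assumption (a short completion from a smaller model may well cost less). The query counts are the "hundreds or even thousands" mentioned above, not measurements.

```python
# Per-query energy quoted in the thread; reusing it for autocomplete
# requests is an assumption made only for illustration.
WH_PER_QUERY = 3.0


def daily_energy_wh(queries_per_day: int, wh_per_query: float = WH_PER_QUERY) -> float:
    """Total energy in watt-hours for a day's worth of queries."""
    return queries_per_day * wh_per_query


for queries in (1, 100, 1000):
    wh = daily_energy_wh(queries)
    print(f"{queries:>5} queries/day -> {wh:>7.1f} Wh ({wh / 1000:.3f} kWh)")
```

So the scale changes by whatever factor the query count changes, which is why the per-query number on its own doesn't settle the question.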

GitHub Copilot is not ChatGPT

Yet again... You fundamentally have the wrong answer...

https://en.wikipedia.org/wiki/GitHub_Copilot

https://github.com/features/copilot

GitHub Copilot was literally developed WITH OpenAI, the creators of ChatGPT... and you can run o1, o3, o4 directly in there.

https://docs.github.com/en/copilot/using-github-copilot/ai-models/changing-the-ai-model-for-copilot-code-completion

It defaults to 4o mini.

Thank you

None of this was true of Copilot for years, but I stand corrected as to the current state of affairs.