LocalLLaMA

Welcome to LocalLLaMA! Here we discuss running and developing machine learning models at home. Let's explore cutting-edge open-source neural network technology together.

Get support from the community! Ask questions, share prompts, discuss benchmarks, and get hyped about the latest and greatest model releases! Enjoy talking about our awesome hobby.

As ambassadors of the self-hosting machine learning community, we strive to support each other and share our enthusiasm in a positive, constructive way.

Rules:

Rule 1 - No harassment or personal character attacks on community members, i.e., no name-calling, no generalizing about entire groups of people that make up our community, and no baseless personal insults.

Rule 2 - No comparing artificial intelligence/machine learning models to cryptocurrency, i.e., no comparing the usefulness of models to that of NFTs, no claiming that the resources required to train a model are anything close to those needed to maintain a blockchain or mine crypto, and no implying it's just a fad/bubble that will leave people with nothing of value when it bursts.

Rule 3 - No comparing artificial intelligence/machine learning to simple text prediction algorithms, i.e., statements such as "LLMs are basically just simple text prediction like what your phone keyboard's autocorrect uses, and they're still using the same algorithms as <over 10 years ago>."

Rule 4 - No implying that models are devoid of purpose or potential for enriching people's lives.


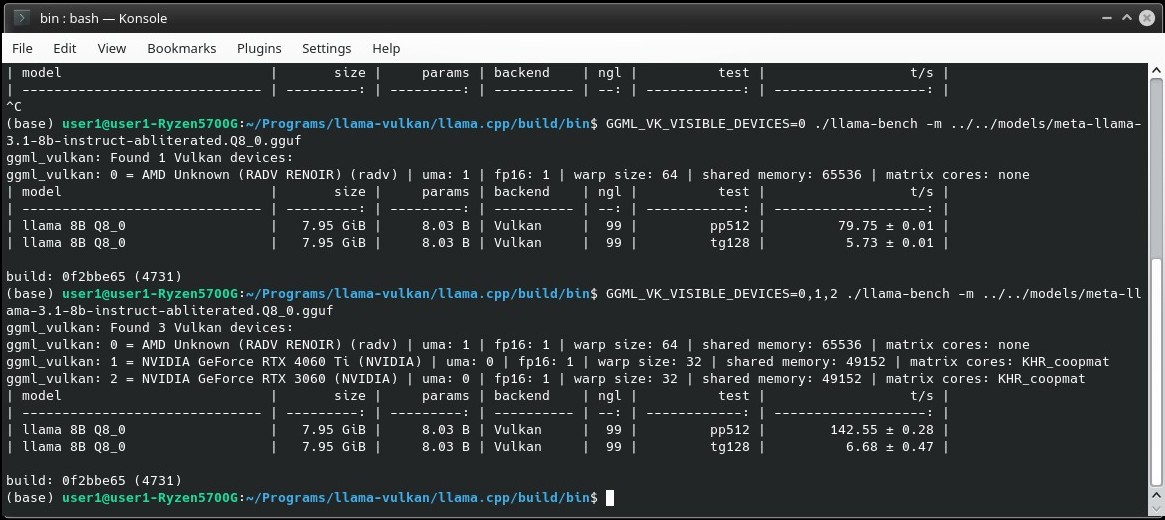

Is BLAS with CPU only faster than Vulkan with CPU+iGPU? After failing to get the SYCL backend in llama.cpp working, apparently because of a Debian driver issue, I ended up using the Vulkan backend. But after many tests, offloading to the iGPU doesn't seem to make much difference.

Uh, that's a complicated question. I don't know whether BLAS, Vulkan, or SYCL is faster on an iGPU. I think I've read many different takes on that, and I suppose it has probably changed since I last tested it. People are optimizing the code all the time, and it probably also depends on the processor generation and things like that.

All I can say is that setting up SYCL is a hassle and requires something like 10 GB of development libraries, and I didn't see any noticeable improvement in speed. Either I did something wrong or it's not worth it on my computer. Vulkan made everything slower on my 8th-generation laptop's iGPU, but I'm not sure whether that applies generally. So I'm currently sticking to the default backend; I believe that's BLAS. But then again, KoboldCPP recently replaced OpenBLAS with NoBLAS(?), and I haven't kept up to date, and it's just too many options... 😅

I don't have any good advice. Maybe try all the options and see which is the fastest... It seems to me that using the iGPU likely makes it slower, not faster.
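If you do want to "try all the options", one rough way to compare is to time a few runs per backend and rank the backends by median generation speed. Below is a minimal Python sketch of that idea. It doesn't call llama.cpp or KoboldCPP itself. The `rank_backends` helper and the backend labels are hypothetical names, and it assumes you record the number of generated tokens and the elapsed seconds from your own runs.

```python
import statistics
from typing import Dict, List, Tuple

# One measurement = (tokens_generated, elapsed_seconds) from a single run.
Measurement = Tuple[int, float]


def tokens_per_second(m: Measurement) -> float:
    """Convert one run's token count and wall-clock time into tokens/s."""
    tokens, seconds = m
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return tokens / seconds


def rank_backends(results: Dict[str, List[Measurement]]) -> List[Tuple[str, float]]:
    """Return (backend_label, median tokens/s) pairs, fastest first.

    Backend labels are whatever you choose, e.g. "cpu-only" or
    "vulkan+igpu" (hypothetical labels, not tool options).
    """
    ranked = []
    for backend, runs in results.items():
        if not runs:
            continue  # skip backends that never produced a successful run
        rates = [tokens_per_second(r) for r in runs]
        ranked.append((backend, statistics.median(rates)))
    # Highest median throughput first.
    ranked.sort(key=lambda pair: pair[1], reverse=True)
    return ranked
```

Using the median rather than the mean keeps a single unusually slow or fast run (for example, a cold first run) from skewing the comparison.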