this post was submitted on 21 Sep 2024

Asklemmy

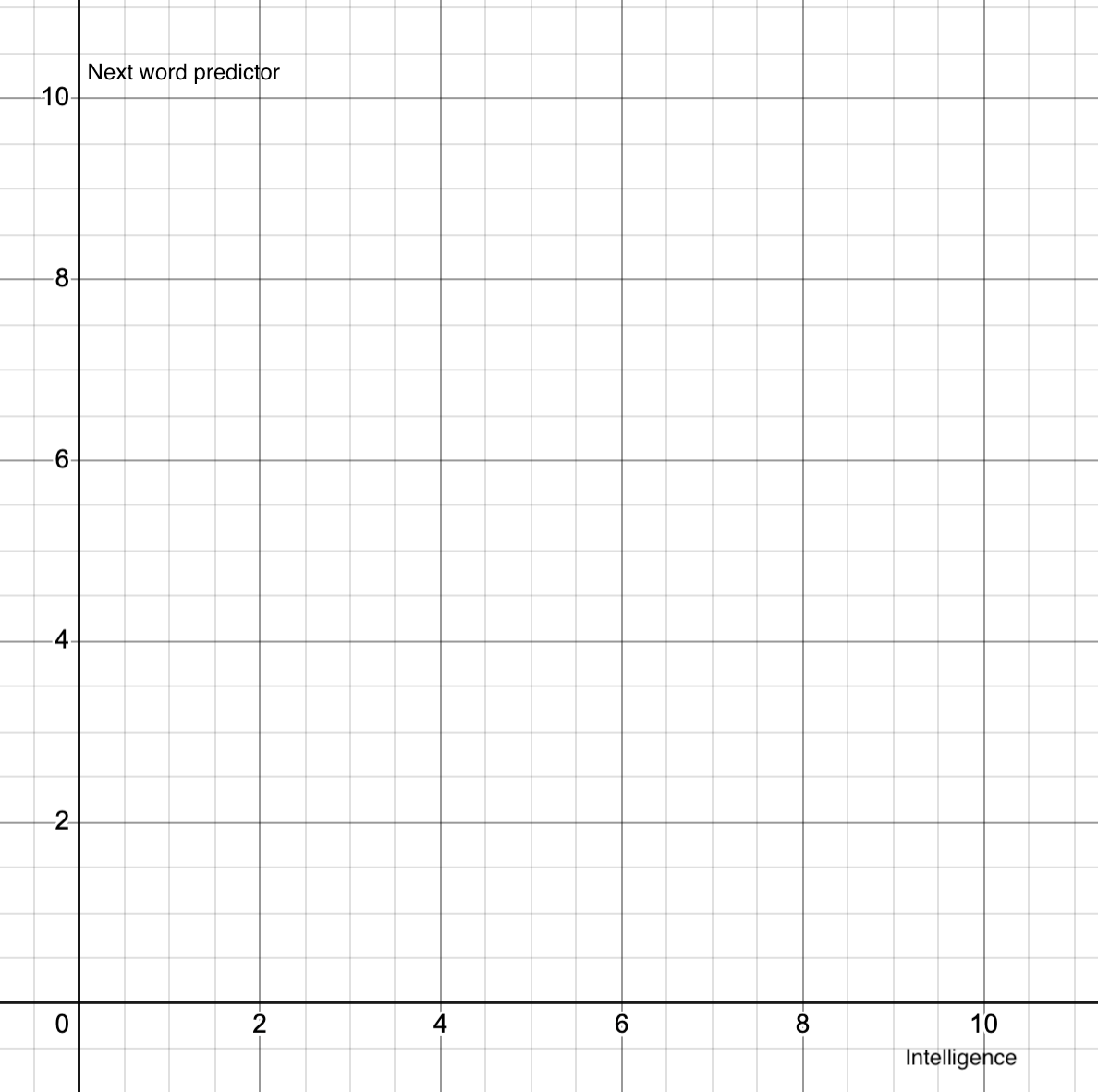

That's literally how LLMs work; they quite literally are just next-word predictors. There is zero intelligence to them.

It's literally a loop: while the token is not "stop", predict the next token.
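To make that loop concrete, here is a minimal sketch. It assumes a toy bigram table (`BIGRAMS`, made up for illustration) in place of a trained network, and the names `predict_next` and `generate` are hypothetical. A real LLM conditions on the whole preceding context rather than only the last token, but the outer loop has the same shape.

```python
import random

# Toy bigram table standing in for a trained model (hypothetical data).
BIGRAMS = {
    "<start>": {"the": 1.0},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 1.0},
    "dog": {"sat": 1.0},
    "sat": {"<stop>": 1.0},
}


def predict_next(token, rng):
    """Sample the next token from the toy distribution for `token`."""
    candidates = BIGRAMS[token]
    words = list(candidates)
    weights = [candidates[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


def generate(rng, max_tokens=20):
    """Keep predicting until the stop token (or a length cap) is reached."""
    output = []
    token = "<start>"
    while len(output) < max_tokens:
        token = predict_next(token, rng)
        if token == "<stop>":
            break
        output.append(token)
    return output


if __name__ == "__main__":
    print(" ".join(generate(random.Random(0))))
```

The length cap is only there so the sketch cannot loop forever if the stop token never comes up.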

It's just that they are pretty good at predicting the next token so it feels like intelligence.

So on your graph, it would be a vertical line at 0.

What is intelligence though? Maybe I'm getting through life just by being pretty good at predicting what to say or do next...

Yeah, yeah, I've heard this argument before: "What is learning if not like training?" I'm not going to define it here. It doesn't "think". It doesn't have nuance. It is simply a prediction engine. A very good prediction engine, but that's all it is. I spent several months of unemployment teaching myself the ins and outs, developing against LLMs, training a few of my own. I'm very aware that it is not intelligence. It pulls off a very clever trick, and it's easy to fool people into thinking it is intelligence - but it's not.

But how do you know that the human brain is not just a super sophisticated next-thing predictor that by being super sophisticated manages to incorporate nuance and all that stuff to actually be intelligent? Not saying it is but still.

Because we have reason, understanding. Take something as simple as the XY problem. Humans understand that there are nuances to prompts and questions. I like the XY because a human knows to step back and ask "what are you really trying to do?". AI doesn't have that capability, it doesn't have reasoning to say "maybe your approach is wrong".

So, I'm not the one to define what it is or on what scale. But I can say that it's not human intelligence.

Agreed

This is true if you describe a pure LLM, like GPT-3.

However, systems like Claude, GPT-4o and o1 are far from just a single LLM; they are a blend of tailored LLMs, machine learning, and some old-fashioned code to weave it all together.

OP does ask about a "modern LLM", so technically you are right, but I believe they meant the more advanced "products".

Though I would not be able to actually answer OP's question; AI is hard to compare directly with a human.

In most ways it's embarrassingly stupid; in others it has already surpassed us.

That is just next word prediction with extra steps.

Now that is fair.

None of which are intelligence, and all of which are catered towards predicting the next token.

All the models have a total reliance on data and structure for inference and prediction. They appear intelligent but they are not.

How is good old-fashioned code comparing outputs to a database of factual knowledge "predicting the next token" to you? Or reinforcement learning and token rewards baked into models?
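As a purely hypothetical illustration of what "old-fashioned code comparing outputs to a database" could look like, here is a minimal sketch. It makes no claim about how any real product works: `KNOWN_FACTS`, `fake_model` and `answer_with_check` are invented names, and the "model" is a canned lookup that is deliberately wrong on one question.

```python
# Hypothetical fact table; a real system would query a curated store.
KNOWN_FACTS = {
    "capital of france": "Paris",
    "boiling point of water at sea level in celsius": "100",
}


def fake_model(question):
    """Stand-in for an LLM call; deliberately wrong on one question."""
    canned = {
        "capital of france": "Lyon",
        "largest planet": "Jupiter",
    }
    return canned.get(question, "I don't know")


def answer_with_check(question):
    """Return (answer, status), letting the fact table override the draft."""
    key = question.strip().lower()
    draft = fake_model(key)
    expected = KNOWN_FACTS.get(key)
    if expected is None:
        # Nothing to compare against, so the draft passes through as-is.
        return draft, "unchecked"
    if draft != expected:
        # Plain deterministic code, no token prediction involved.
        return expected, "corrected"
    return draft, "verified"


if __name__ == "__main__":
    print(answer_with_check("Capital of France"))
    print(answer_with_check("largest planet"))
```

The comparison step is ordinary lookup logic, while the draft it checks would still come from a next-token predictor, which is roughly where the two sides of this thread disagree.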

I can tell you have not actually tried to work with professional AI or looked at the research papers.

Yes, none of it is "intelligent", but I would counter that neither are human beings; we don't even know how to define intelligence.

No, unfortunately you are wrong.

GPT-4 is a better version of GPT-3.

The brand new one that is allegedly "unhackable" just has a role hierarchy providing rules, and that hasn't been fully tested in the wild yet.

First, did you even read the research papers?

Secondly, none are out that are actually immune to jailbreaking lol. Where did that claim come from?

GPT-4 is just an LLM. Indeed, the better version of GPT-3.

GPT-4o and o1 (possibly Claude Sonnet as well) rely on the generative capacities of the GPT-4 model, but there is a lot more going on under the hood that is not simply "generate the next token".

We all agree that a pure text predictor is not at all intelligent.

The discussion at hand is whether the current frontier of AI has moved the needle up. I would still call it pretty dumb, but it did move that needle. Somewhere around (x=2, y=0.5), if I have to use the meme. Stating it's (0,0) just means people aren't interested enough to pay attention to the fact that these aren't just LLMs anymore. That's their right, but I'd prefer that people stop joining the discussion so uninformed.