this post was submitted on 15 May 2024



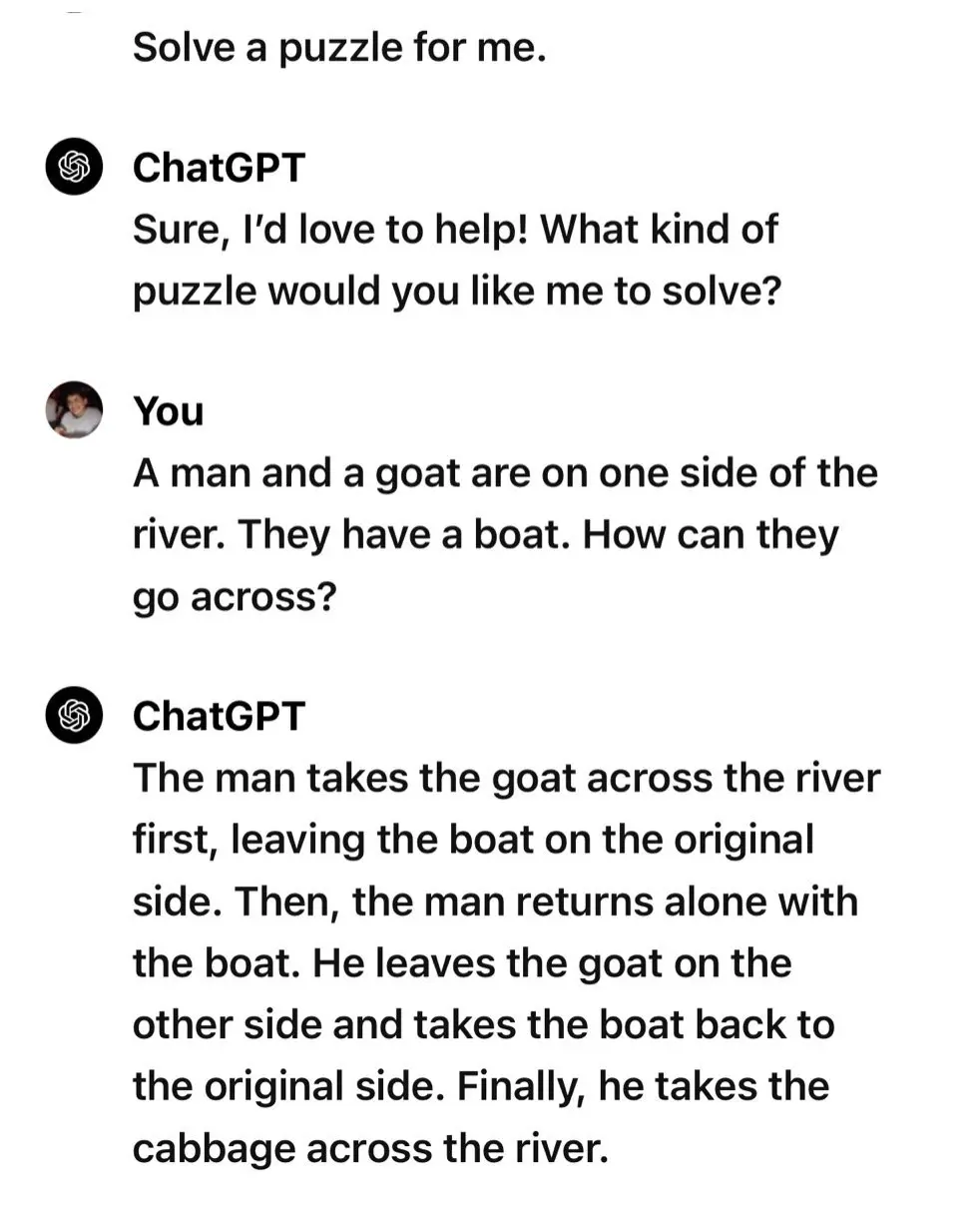

Sean Carroll has talked about a few word puzzles he asked ChatGPT and GPT-4 (or whatever), and they were interesting examples. In one he asked something to the effect of "if I cooked a pizza in a pan yesterday at 200 C, is it safe to pick up?" and it answered with a very wordy "no, it's not safe" because that was the best match of a next phrase given his question, and not because it can actually consider the situation.

Let's try with Claude 3 Opus:

Bonus:

Versions matter in software, and especially so in LLMs given the rate of change.

Someone in the comments on the original Twitter thread showed Claude's solution to the above "riddle". It was just as sane as your example: it correctly answered that the man and the goat can just row together to the other side, and correctly identified that there are no hidden restrictions like other items to take aboard. It nevertheless used an excessive amount of text (like myself here).

Gemini: The man rows the goat across.

Work ethics 404

I don't deny that this kind of thing is useful for understanding the capabilities and limitations of LLMs, but I don't agree that "the best match of a next phrase given his question, and not because it can actually consider the situation." is an accurate description of an LLM's capabilities.

While they are dumb and unworldly, they can consider the situation: they evaluate a learned model of concepts in the world to decide if the first word of the correct answer is more likely to be yes or no. They can solve unseen problems that require this kind of cognition.

But they are only book-learned and so they are kind of stupid about common sense things like frying pans and ovens.
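To make the "is the first word more likely to be yes or no" step concrete, here is a minimal toy sketch. It assumes the model has already produced one raw score (logit) per candidate first word; the numbers and the three-word vocabulary are made up for illustration and do not come from any real model.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Hypothetical scores for the first word of the answer to the pizza question.
# Invented values, not taken from any real model.
toy_logits = {"Yes": 2.0, "No": 0.5, "Maybe": -1.0}

probs = softmax(toy_logits)
first_word = max(probs, key=probs.get)  # greedy pick: the most likely first word
print(first_word, probs)
```

Whether the scores come from shallow phrase matching or from something closer to a model of the situation is exactly what this thread is arguing about; the sketch only shows the final selection step.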

Huh, "book-learned", that's an interesting way to put it. I've been arguing for a while that the bottleneck for LLMs might not be their reasoning ability, but the one-dimensionality of their dataset.

I don't like both-sides-ing but I'm going to both-sides here: people on the internet have weird expectations for LLMs, which is strange to me because "language" is literally in the name. They "read" words, they "understand" words and their relationships to other words, and they "write" words in response. Yeah, they don't know the feeling of being burned by a frying pan, but if you were numb from birth you wouldn't either.

Not that I think the OP is a good example of this; the concept of "heat" is pretty well documented.

Yep, still lacking any sapience.

And nobody on the internet is asking obvious questions like that, so counterintuitively it's better at solving hard problems. Not that it actually has any idea what it is doing.

EDIT: Yeah guys, I understand that it doesn't think. Thought that was obvious. I was just pointing out that it's even worse at providing answers to obvious questions that there is no data on.

Unfortunately it doesn't have the capacity to "solve" anything at all, only to take a text given by the user and parse it into what essentially amounts to codons, then provide other codons that fit the data it was provided to the best of its ability. When the data it is given is purely textual, it does really well, but it cannot "think" about anything, so it cannot work with new data and it shows its ignorance when provided with a foreign concept/context.

edit: it also has a more surface-level filter to remove unwanted results that are offensive

You don't get the point, do you?