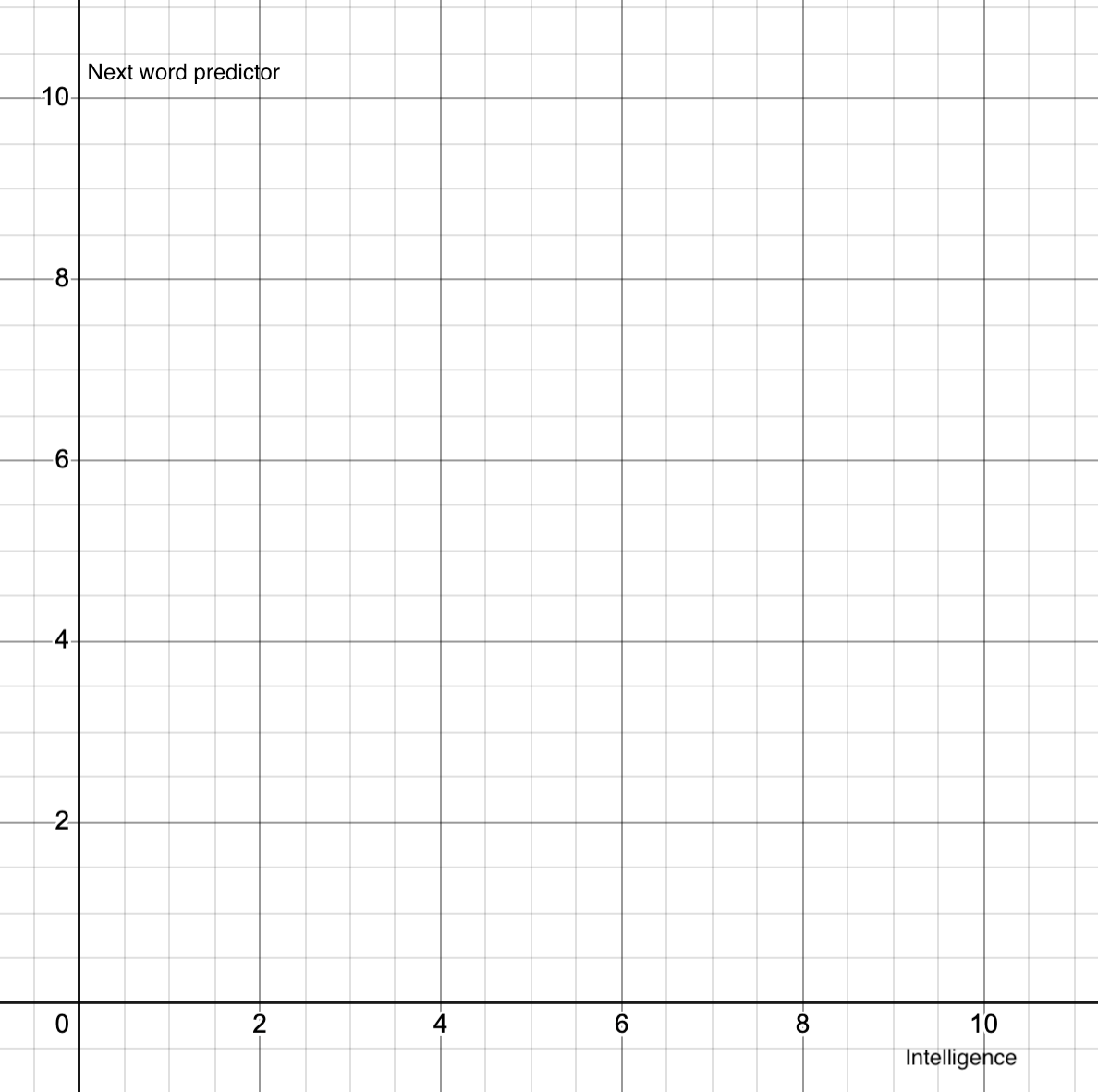

I'm going to say x=7, y=10. The sum x+y is not 10, because choosing the next word accurately in a complex passage is hard. The x of 7 is just my gut guess about how smart they are; by different empirical measures it could be 2 or 40.

this post was submitted on 21 Sep 2024

Asklemmy

I hold a very strong hypothesis, which I’ve not seen any data contradict yet, that intelligence is only possible with formal language and symbolics, and therefore formal language and intelligence are very hard to separate. I don’t think one created the other; they evolved together.

Yeah, I think the human brain is a vehicle for a "mind virus", which is script and ideas.

With GPT o1, I think there is a very small piece of intelligence at play, but in my mind it's basically (8.5, 1.5) on this.