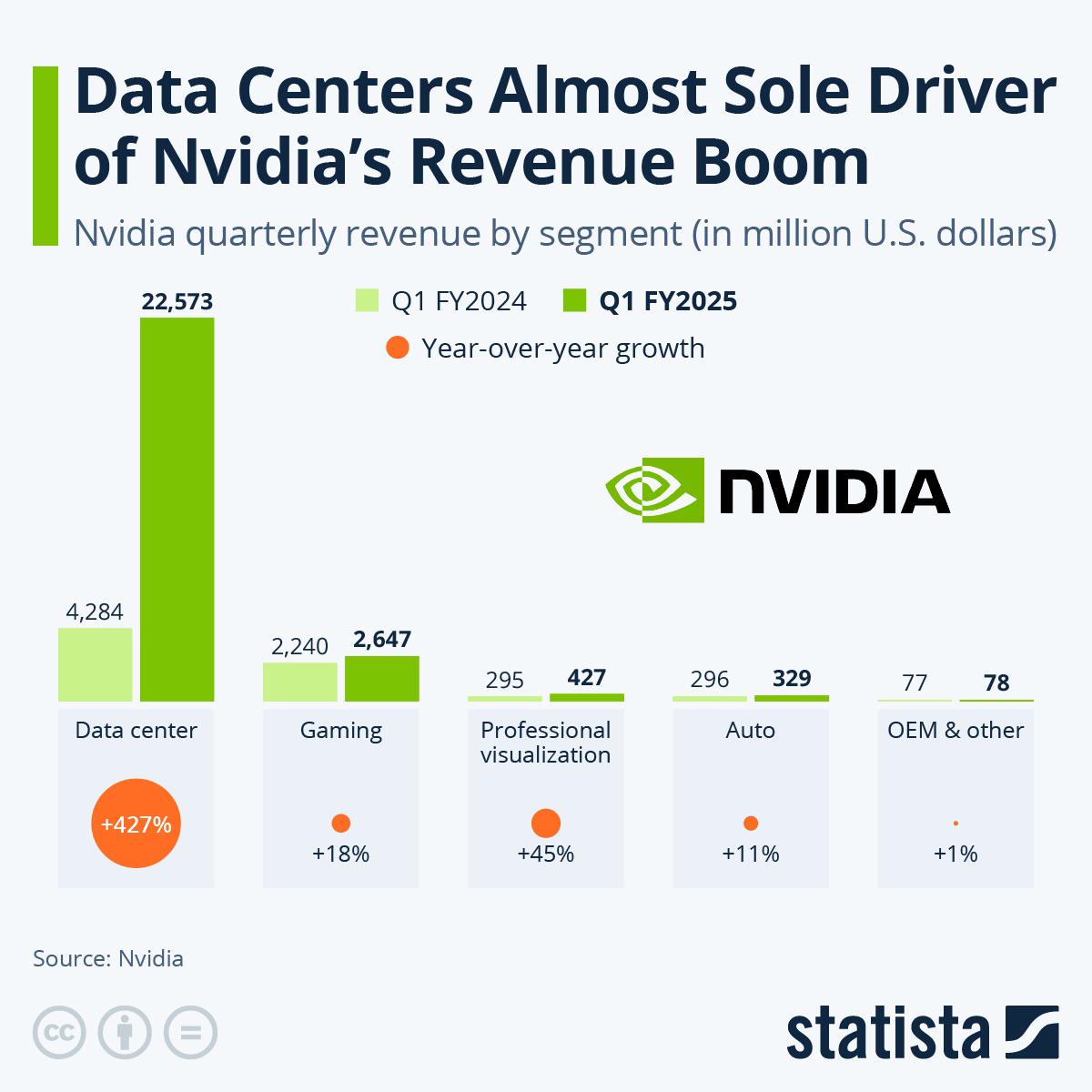

So here's the way I see it: with data center profits being what they are, I don't think Nvidia is going to do us any favors on GPU pricing next generation. And apparently the new rule is that Nvidia cards exist to bring AMD prices up.

So here's my plan. Starting with my current system:

OS: Linux Mint 21.2 x86_64

CPU: AMD Ryzen 7 5700G with Radeon Graphics (16) @ 4.673GHz

GPU: NVIDIA GeForce RTX 3060 Lite Hash Rate

GPU: AMD ATI 0b:00.0 Cezanne

GPU: NVIDIA GeForce GTX 1080 Ti

Memory: 4646MiB / 31374MiB

I think I'm better off just buying another 3060, or maybe a 4060 Ti 16GB. To be nitpicky, I can get three 3060s for the price of two 4060 Tis and get more total VRAM plus a wider memory bus. The 4060 Ti is probably better in the long run; it's just so damn expensive for what you're actually getting. The 3060 really is the working man's compute card. It needs to be on an all-time-greats list.
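A quick sketch of that comparison, for anyone who wants the numbers. The spec figures are assumptions taken from the usual spec sheets (12GB on a 192-bit bus for the 3060, 16GB on a 128-bit bus for the 4060 Ti 16GB), and prices are left out because none are given here.

```python
# Spec-sheet figures (assumed, not measured on the system above).
CARDS = {
    "RTX 3060 12GB": {"vram_gb": 12, "bus_bits": 192},
    "RTX 4060 Ti 16GB": {"vram_gb": 16, "bus_bits": 128},
}


def total_vram_gb(card: str, count: int) -> int:
    """Combined VRAM across `count` identical cards."""
    return CARDS[card]["vram_gb"] * count


def wider_bus(card_a: str, card_b: str) -> str:
    """Name of the card with the wider per-card memory bus."""
    return max((card_a, card_b), key=lambda c: CARDS[c]["bus_bits"])


if __name__ == "__main__":
    print(total_vram_gb("RTX 3060 12GB", 3))     # 36
    print(total_vram_gb("RTX 4060 Ti 16GB", 2))  # 32
    print(wider_bus("RTX 3060 12GB", "RTX 4060 Ti 16GB"))  # RTX 3060 12GB
```

Total VRAM is only part of the story: splitting a model across three cards instead of two adds its own overhead, which this doesn't try to capture.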

My limitations: I don't have room for full-length cards (a 1080 Ti, at 267mm, just barely fits), and I don't want the cursed power connector. I also don't really want to buy used because I've lost all faith in humanity and trust in my fellow man, but I realize that's more of a "me" problem.

Plus, I'm sure that used P40s and P100s are a great value as far as VRAM goes, but how long are they going to last? I've been using GPGPU since the early days of LuxRender OpenCL and Daz Studio Iray, so I know that sinking feeling when older CUDA versions get dropped from support and my GPU becomes a paperweight. Maxwell is already deprecated, so Pascal's days are definitely numbered.

On the CPU side, I'm upgrading to whatever they announce for Ryzen 9000, plus a ton of RAM. Hopefully they have some models without NPUs; I don't think I'll need one. As far as what I'm running, it's Ollama and Oobabooga, mostly models 32GB and lower. My goal is to run Mixtral 8x22b, but I'll probably have to run it at a lower quant, maybe one of the 40 or 50GB versions.
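A rough back-of-the-envelope for what "a lower quant" means here, assuming Mixtral 8x22b has about 141 billion total parameters (Mistral's published figure, not something stated above) and that file size is roughly parameters times bits per weight. It ignores KV cache, context, and per-format overhead, so treat the results as ballpark only.

```python
PARAMS_BILLION = 141  # assumed total parameter count for Mixtral 8x22b


def quant_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def max_bits_per_weight(params_billion: float, budget_gb: float) -> float:
    """Largest average bits per weight that fits in `budget_gb`."""
    return budget_gb * 8 / params_billion


if __name__ == "__main__":
    # A flat 4 bits per weight is already well past the 40-50GB target.
    print(quant_size_gb(PARAMS_BILLION, 4))  # 70.5
    for budget in (40, 50):
        bpw = max_bits_per_weight(PARAMS_BILLION, budget)
        print(f"{budget}GB budget -> about {bpw:.2f} bits per weight")
```

Under those assumptions, the 40 to 50GB versions land somewhere between roughly 2 and 3 bits per weight.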

My budget: Less than Threadripper level.

Thanks for listening to my insane ramblings. Any thoughts?

Uhm, hm? Does this have anything to do with the post or my comment orrrrr is your message random token generation?

Oh yes. You replied to a 5-month-old comment on AI text, so I thought the Gandalf meme was suitable and I expanded on what was said. If you've never used SillyTavern, it's a great interface.

But now you've got me wondering: are you a cylon?

Ooh, I thought SillyTavern was something completely unrelated! I have not heard of it, but now I'll try to hook it up to Ollama.

I don't think I'm a cylon... Again, I'm probably missing something, but online I just see these guys when looking for that word.

So no, I'm not some aggressive killer robot, and I'm also not a cyclon (which I searched for at first, misreading your message).

Is cylon another LLM related word, or something completely different?

<im_end>

Just kidding, not a bot.

Haha yeah that's first gen cylons.

In the newer show they can be sleeper agents that are identical to humans and can even think they are real. The newer show is pretty good if you want to watch a TV series that works like Among Us.

https://www.rottentomatoes.com/tv/battlestar-galactica

This guy has some pretty strong storytelling models if you find yours isn't doing well.

https://huggingface.co/TheDrummer