I just stop my containers and tar/gzip their compose files, their volumes, and the /etc folder on the host.
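A rough sketch of what that can look like as a script. The function names and the folder layout are made up for illustration, and it assumes the volumes are bind mounts living inside the stack folder (named volumes live elsewhere and would need their own path). Reading all of /etc usually needs root.

```shell
#!/bin/sh
# Sketch only: stop a compose stack, archive it, start it again.
# Function names and directory layout are hypothetical.

# archive_paths DEST ARGS... : write a gzip-compressed tarball to DEST.
# ARGS are passed straight to tar (paths, or things like -C DIR).
archive_paths() {
    dest=$1
    shift
    tar -czf "$dest" "$@"
}

# backup_stack STACK_DIR DEST : stop the stack, archive the stack folder
# plus /etc, then start the stack again even if the archive step failed.
backup_stack() {
    stack_dir=$1
    dest=$2
    docker compose --project-directory "$stack_dir" stop || return 1
    archive_paths "$dest" "$stack_dir" /etc
    status=$?
    docker compose --project-directory "$stack_dir" start
    return "$status"
}
```

Usage would be something like `backup_stack /srv/stacks/jellyfin /backups/jellyfin.tar.gz`, where both paths are placeholders.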

Assuming you have all of them under a folder, I just run this lol

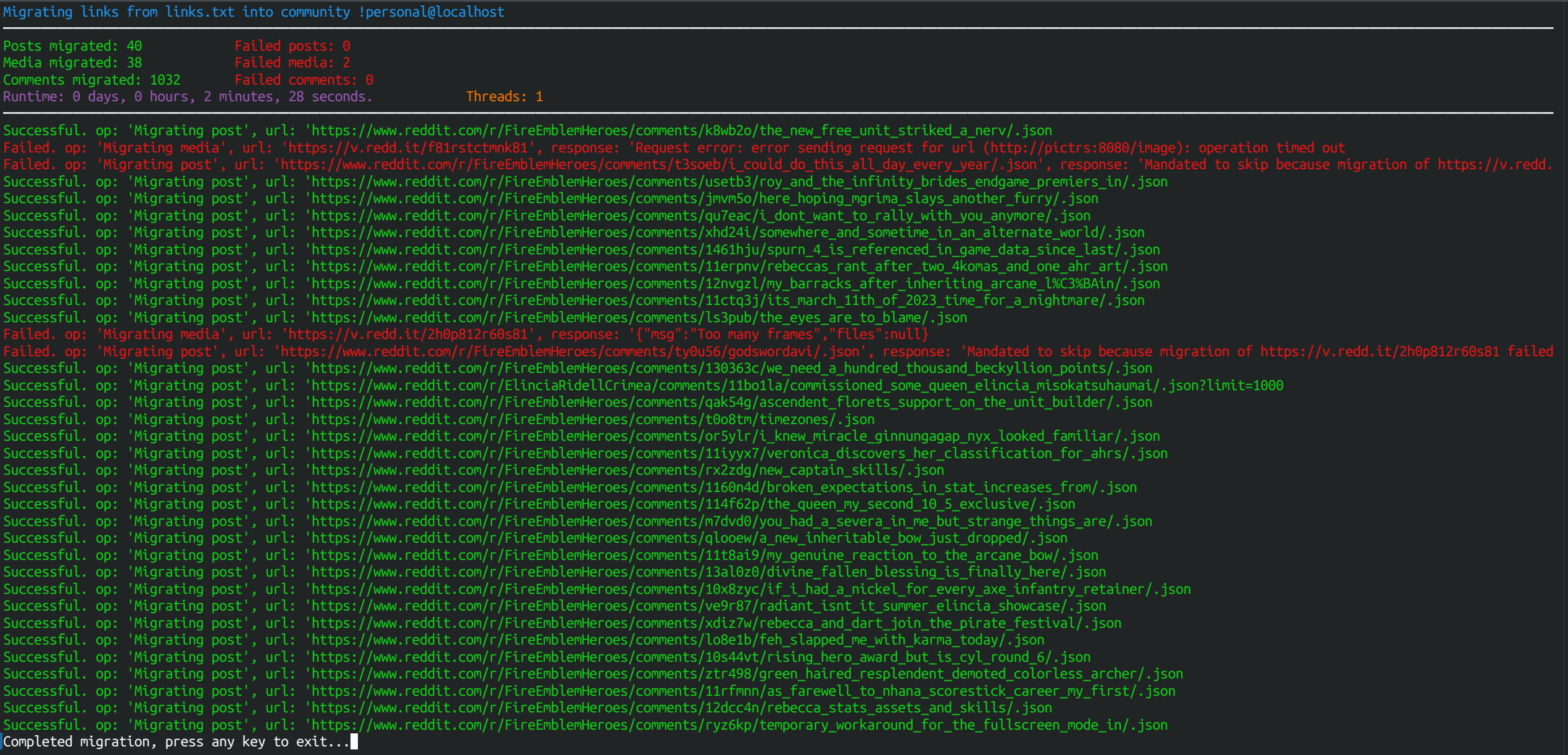

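```sh
# Update every git checkout directly under the current folder.
for f in */; do
    [ -e "$f/.git" ] || continue   # skip folders that are not git checkouts
    echo "$f"
    git -C "$f" pull
    # Move submodules to the latest commit of their tracked remote branch.
    git -C "$f" submodule update --recursive --remote
    echo ""
    echo "#########################################################################"
    echo ""
done
```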

For archival purposes, software encoding is always more efficient size-wise.

I am also waiting for an Arc to arrive to plug into my Jellyfin box.

Hardware encoding is fast, yeah, but it won't save me disk space.
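For reference, a minimal sketch of a software AV1 encode with ffmpeg and SVT-AV1. The wrapper name and the default preset/CRF values are my own assumptions, not a recommendation. Lower preset numbers are slower and compress better.

```shell
#!/bin/sh
# Sketch only: software AV1 encode through ffmpeg's libsvtav1 encoder.
# encode_av1_sw and its defaults (preset 4, CRF 30) are hypothetical.

# encode_av1_sw INPUT OUTPUT [PRESET] [CRF]
encode_av1_sw() {
    input=$1
    output=$2
    preset=${3:-4}   # lower = slower, smaller files at the same quality
    crf=${4:-30}     # lower = higher quality, bigger files
    # Re-encode video only; audio is copied as-is.
    ffmpeg -i "$input" -c:v libsvtav1 -preset "$preset" -crf "$crf" -c:a copy "$output"
}
```

Something like `encode_av1_sw movie.mkv movie.av1.mkv 2 28` would be a slow, archival-style run.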

Still not sure whether I will upgrade to a 9900X or a 9700X from my 3700X.

Lately I'm:

- Doing a lot of AV1 software encoding at low presets

- Not yet trusting the future of ROCm

So going harder on the stronger CPU rather than an expensive GPU seems to be the answer for me. If I gamble on proper ROCm support for some AI workloads and lose, at least I could still run some casual stuff on the CPU device.

From what I understand it's still limited by the server to real time, so downloading a 2 hour movie would still take two hours 🥲

Yeah, I have been eyeing upgrades to get AVX-512 anyway, because lately I have been doing very heavy, very low preset AV1 encodes, but when they are a dick about it I just feel like postponing it.

lol for the past 15 years I have "rebuilt" my desktop every 5 years, but I didn't expect they would try to force me out of my Ryzen 7 3700X right on the date

I am an Arch Linux user btw

Your connection is 40 MB/s, I assume?

Cat 5e is capable of carrying the full 1 Gbps of my connection, so I easily see over 90 MB/s. That being said, I bought a big 100 m bulk spool years ago and have been crimping it myself with care.

If you were indeed using leftover/free cables of questionable quality, that could indeed be a reason for poor performance.
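Quick sanity check on the numbers, since Mbps and MB/s get mixed up a lot. These helpers are just an illustration and use whole-number math, 8 bits per byte, ignoring protocol overhead.

```shell
#!/bin/sh
# Sketch only: convert between link speed (megabits/s) and
# transfer rate (megabytes/s). Helper names are hypothetical.

# mbps_to_mbytes MBPS : megabits per second -> megabytes per second
mbps_to_mbytes() {
    echo $(( $1 / 8 ))
}

# mbytes_to_mbps MBYTES : megabytes per second -> megabits per second
mbytes_to_mbps() {
    echo $(( $1 * 8 ))
}

mbps_to_mbytes 1000   # gigabit link: 125 MB/s theoretical ceiling
mbytes_to_mbps 40     # 40 MB/s is about a 320 Mbps link
```

So seeing 90+ MB/s on gigabit is in the expected range, and 40 MB/s points to roughly a third of that.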

I'm honestly in the market to build a new PC and keep the one with my Ryzen 7 3700X as a secondary.

Originally I was planning to get more cores but honestly staying at the lower wattage might be better.

As someone who only codes solutions for himself, I don't relate. All the extra time I would spend writing a C solution would never be repaid by the runtime saved over doing it in, say, Python.

The G in LLM stands for Girlfriend